Why Healthcare Needs AI Agents: Connecting FHIR, MCP, and A2A

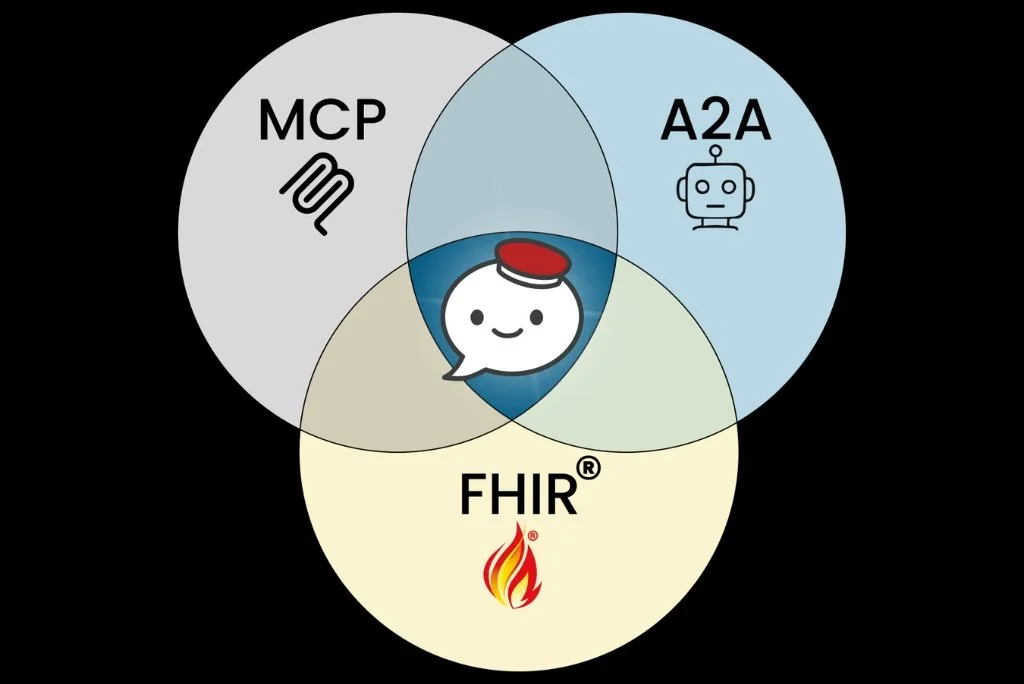

AI agents go beyond chat. They read EHR data, trigger workflows, and collaborate across systems. Powered by MCP, A2A, and FHIR, they represent a new operational layer in healthcare. This article breaks down how these technologies work together to enable real-world clinical applications.

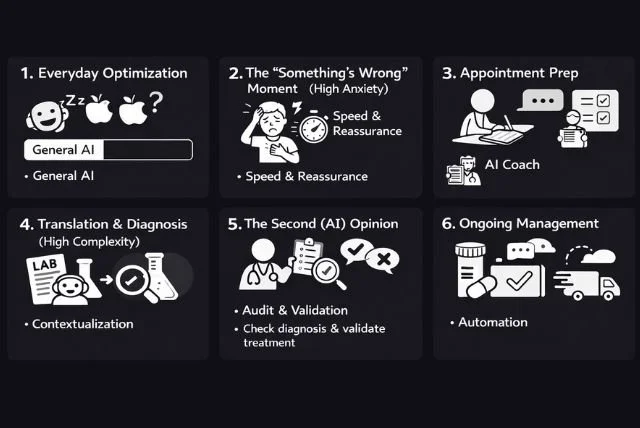

AI for Patients: Moments of Care

Patients do not think about healthcare AI. They turn to it during critical Moments of Care. From everyday optimization to second opinions and chronic disease management, AI’s role shifts with urgency, complexity, and risk. The true divide will not be features, but responsibility. Which patient moments will become AI-native first?

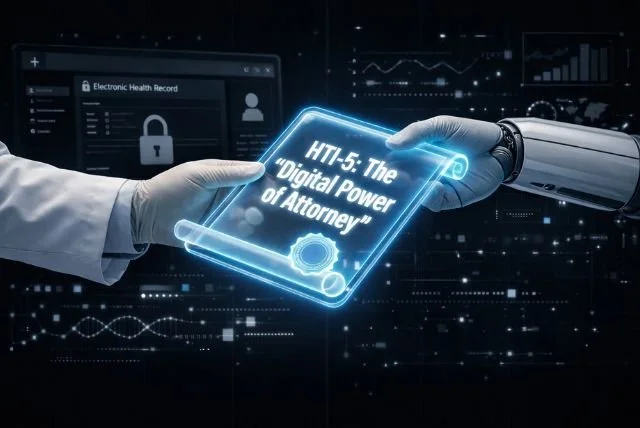

Bots are Users Too: Why HTI-5 Changes Everything

HTI-5 marks a major shift in healthcare interoperability by redefining access to explicitly include AI agents and RPA. By requiring “analogous” access and narrowing the Infeasibility Exception, the rule makes it harder to block automated workflows and third-party write access. AI agents are no longer just tools. They are becoming lawful representatives within clinical systems.

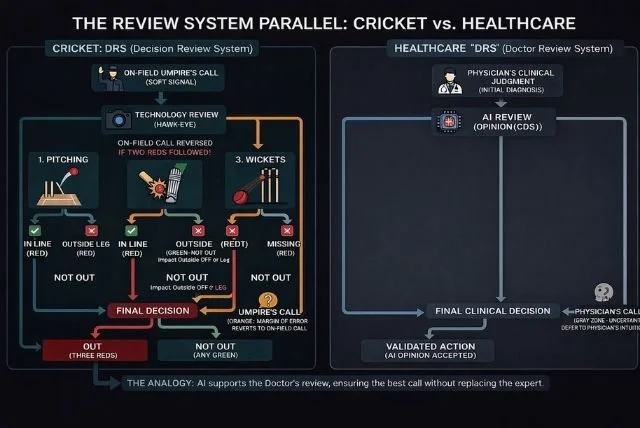

AI in Healthcare: Clinical DRS

Healthcare AI has mastered the “run-outs” of admin and revenue workflows. But medicine is full of “LBW moments,” complex, borderline decisions that require judgment. Like cricket’s Decision Review System, AI should process data, highlight uncertainty, and reduce cognitive load, while ultimately deferring to physician expertise in the Orange Zone.

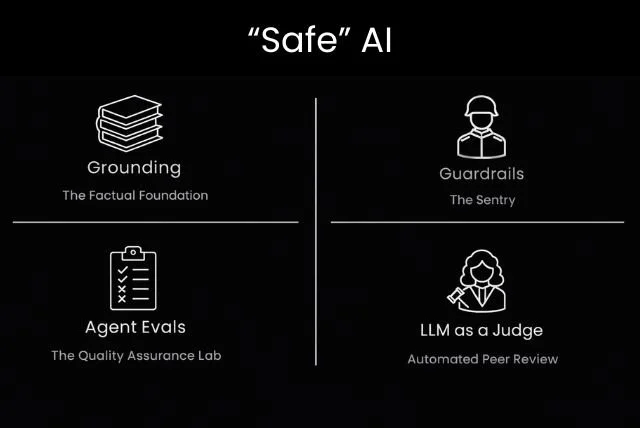

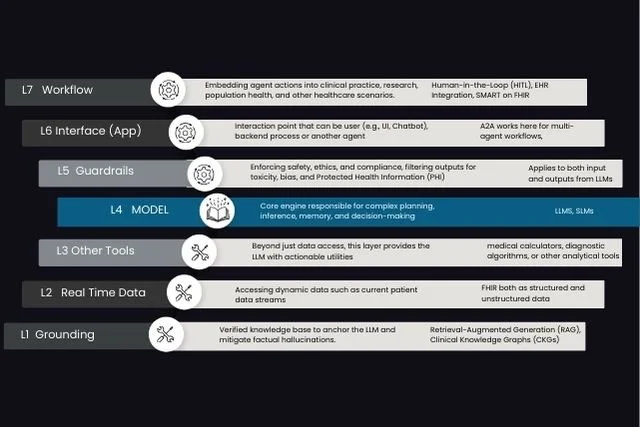

“Safe” AI Agents: The Determinism Inside Non-Determinism

Generative AI thrives on non-determinism, but that flexibility introduces risk. Safe AI is not about removing creativity. It is about layering safeguards. Grounding reduces hallucinations, guardrails enforce runtime rules, evals validate behavior before production, and LLM-as-a-Judge adds scalable oversight. Determinism is not removed. It is engineered around uncertainty.

Guardrails for AI Agents: A Mask Analogy for Safe AI

AI agents require guardrails to operate safely within ethical, legal, and operational boundaries. Using a mask analogy, this article explains the difference between output guardrails that protect users from unsafe responses and input guardrails that protect systems from adversarial prompts or sensitive data exposure. Safe AI is built through layered design, not a single filter.

An “Open” Agent Framework: Building AI That Avoids the EHR Trap

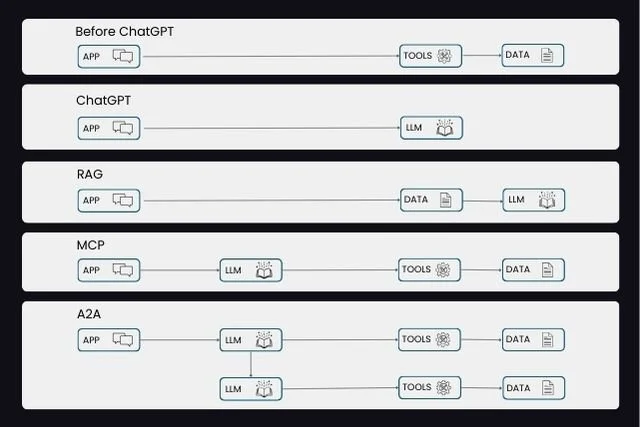

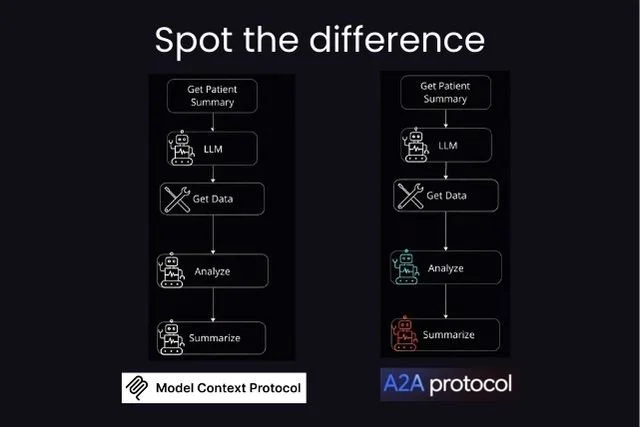

How do we avoid repeating the EHR era mistakes in healthcare AI? The answer lies in building open-by-design agent frameworks. With standards like MCP, MCP-UI, and A2A, AI systems can become modular, interoperable, and collaborative. Openness is not just about open-source. It is about architectural flexibility that enables innovation at scale.

Guardrails for AI Agents: Designing Safety in a Non-Deterministic World

AI agents are inherently non-deterministic, which makes guardrails essential. Input guardrails protect systems from adversarial prompts and sensitive data exposure, while output guardrails validate responses before delivery. Built with deterministic rules, reusable modules, and multi-agent oversight, guardrails transform experimental AI into production-ready systems.

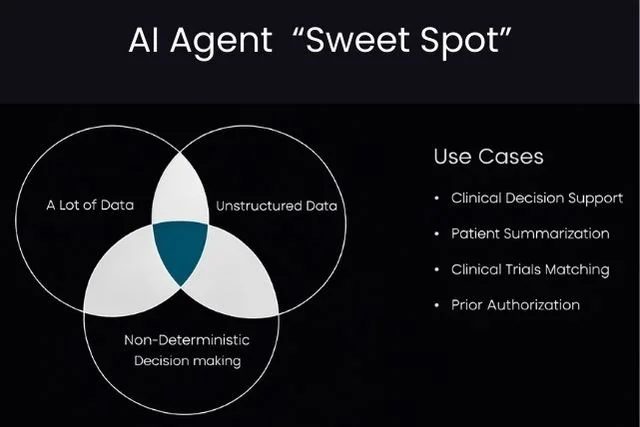

When Do You Really Need AI Agents?

AI agents should not be built because they are trendy. They should be built where they create leverage. In healthcare, that happens at the intersection of massive data, unstructured information, and non-deterministic clinical decisions. This is the Agent Sweet Spot, where LLMs can augment human judgment at scale.

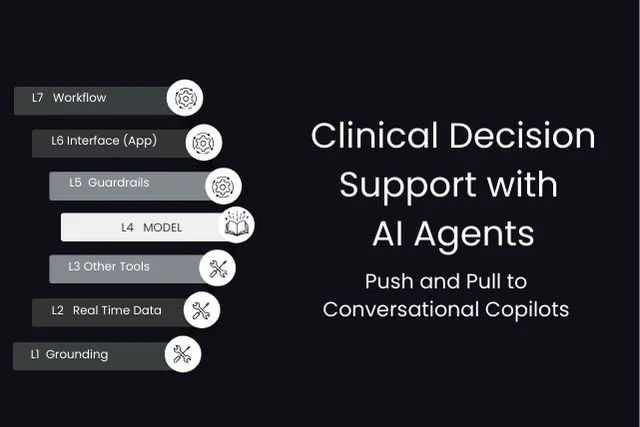

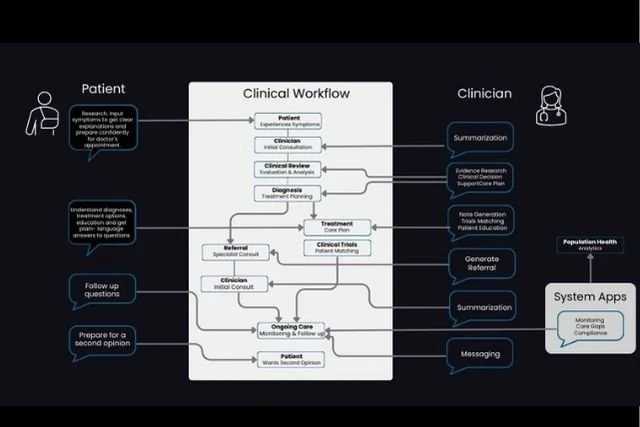

Clinical Decision Support with AI Agents

Traditional CDS relies on push alerts and pull-based searches. AI agents redefine this model by creating conversational clinical copilots that operate proactively and reactively within workflows. These layered systems synthesize data, surface insights, and make recommendations actionable, transforming CDS into continuous, context-aware decision support.

Evaluating AI in Healthcare: Beyond the LLM

LLM benchmarks are not enough to evaluate AI in healthcare. Real-world systems operate through apps and agents across multiple layers. Effective evaluation must include task-level outcome testing and component-level process audits, including tool usage, grounding, safety guardrails, and multi-agent orchestration.

Decoding AI Agents in Healthcare: The 7-Layer Stack

An AI agent is not just a large language model. It is a layered system that integrates grounding, real-time data, tools, guardrails, interface design, and workflow integration. This 7-layer stack demystifies how healthcare AI agents operate and how standards like MCP and Agent-to-Agent enable scalable deployment.

Generative AI in Clinical Workflows

Ambient documentation is only the beginning of Generative AI in healthcare. Real value lies across clinical decision support, patient engagement, trial matching, and population health. While specialized tools will thrive, consolidation around shared AI infrastructure will shape the future of clinical workflows.

From Chatbots to Autonomous Agents

Healthcare AI has moved from menu-driven EHR screens to conversational chatbots, and now to autonomous agents. With RAG grounding, MCP tool invocation, A2A collaboration, and FHIR integration, AI systems are becoming workflow-aware and actionable. This evolution marks the shift from conversation to embedded clinical decision support.

MCP vs Multi-Agent vs A2A

MCP, multi-agent AI, and A2A are often confused but serve distinct roles. MCP enables tool access for a single agent. Multi-agent systems introduce specialization within a platform. A2A enables structured communication between independent agents. Together, they define the future of collaborative healthcare AI systems.

Workflow vs Agent: Cutting Through the AI Semantics

An AI app that uses tools is not necessarily agentic. True agentic AI can reason about goals, act autonomously, and operate within a self-correcting decision loop. In healthcare, we are more likely to see powerful AI workflows before fully autonomous clinical agents. The focus should be on responsible augmentation, not semantics.

Why Won’t Epic Just Build It?

Healthcare AI founders constantly hear the question: Why won’t Epic just build this? With OpenAI entering enterprise healthcare, the tension is real. But the answer isn’t build versus buy. It lies in platform strategy, interoperability, and identifying the right intersection between AI capability and clinical workflow opportunity.

RPA vs Agentic AI: It’s Not About “Can It Think?”

RPA follows instructions. Agentic AI pursues goals. In healthcare, that distinction changes everything. While RPA excels at predictable, rule-based workflows, agentic AI adapts to context, understands intent, and iterates toward outcomes. The future of automation lies in knowing when to use each.

Complex Concepts, Simple Trios

Healthcare AI may be complex, but clarity emerges in threes. This structured framework explores the core strengths of LLMs, essential concepts like context and tools, adoption drivers, enabling technologies such as FHIR and MCP, and transformative use cases including summarization and clinical decision support.

SMART on FHIR: We Built the App Store Rails. So Where’s the App Economy?

SMART on FHIR was designed to power an App Store for Health. The standards are mandated, the APIs exist, and regulatory support is in place. Yet a true substitutable app ecosystem has not fully emerged. What slowed it down, and why might now be the turning point?