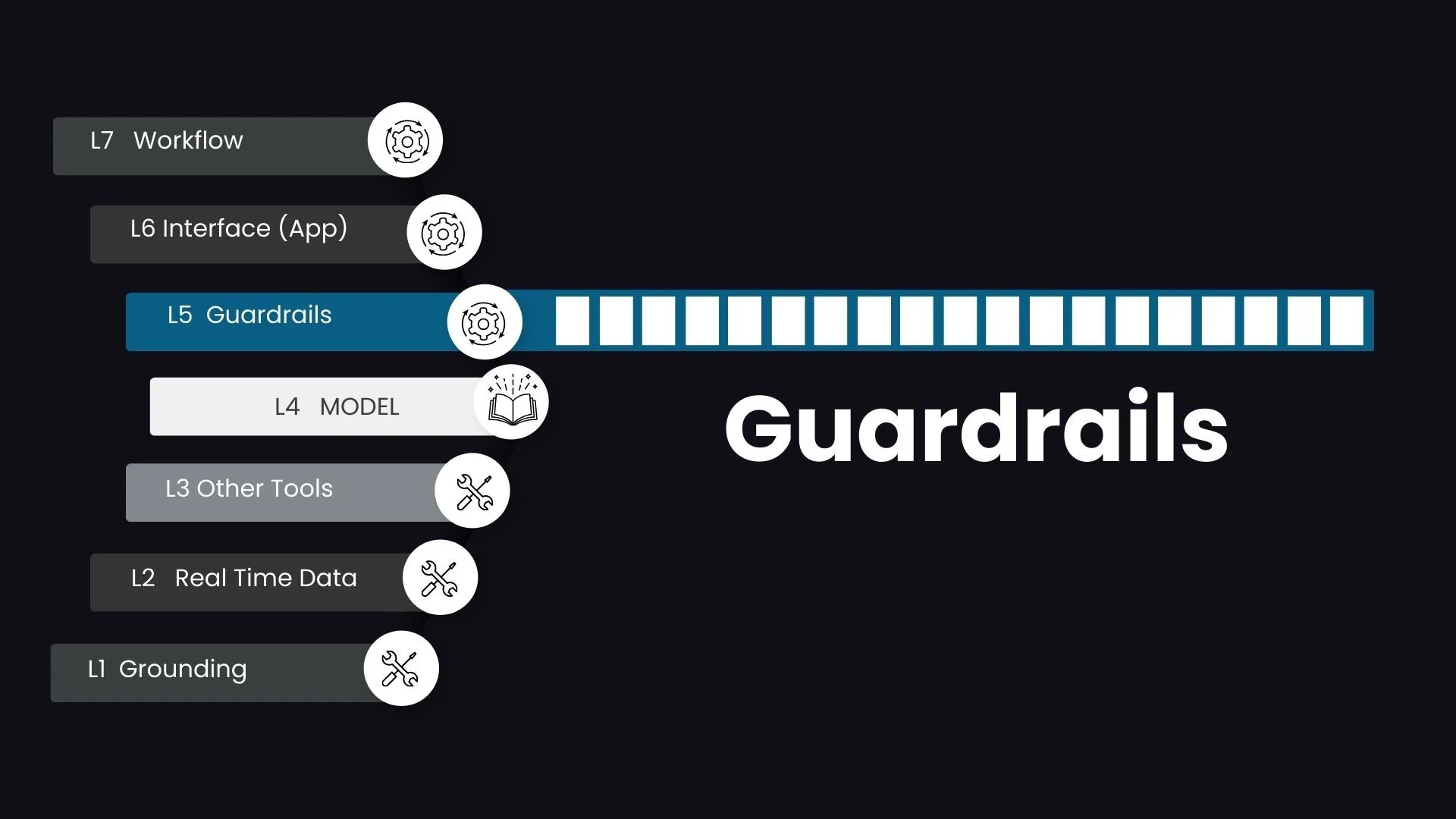

Guardrails for AI Agents: Designing Safety in a Non-Deterministic World

As AI agents become more powerful and autonomous, guardrails are what make them trustworthy. They sit at the intersection of capability and control, ensuring that intelligent systems operate safely in real-world environments.

In high-stakes domains such as healthcare, finance, or legal workflows, guardrails are not optional. They are foundational.

What Is an AI Guardrail?

In the simplest terms, an AI guardrail is a programmatic mechanism designed to ensure that an agent operates within defined ethical, legal, and operational boundaries.

Large language models are inherently non-deterministic. They generate responses probabilistically, which makes them flexible and powerful, but also unpredictable. Guardrails act as the safety net that constrains this unpredictability. They do not remove non-determinism, but they shape it within acceptable limits.

Guardrails are what transform an experimental AI system into a production-ready one.

Safety Around What?

Guardrails can be broadly categorized into two main types based on where they intercept the conversational flow: input guardrails and output guardrails.

1. Input Guardrails - Prompt to LLM

Input guardrails operate on the user’s prompt before it reaches the LLM. They serve a dual role and are often the first line of defense in a production system.

Protecting the System from Bad Actors

Input guardrails detect and mitigate adversarial inputs such as prompt injection or jailbreaking attempts that are designed to override the agent’s default instructions. Without this layer, a cleverly crafted prompt can manipulate the system into unsafe or non-compliant behavior.

Protecting the User from Accidental Exposure

Input guardrails also protect users. For example, they can detect and redact protected health information or other sensitive data that a user might accidentally paste into the system. This is particularly important when interacting with external or public LLMs. By intercepting and transforming the prompt before it is transmitted, the system reduces compliance and privacy risks.

If output guardrails focus on what the agent says, input guardrails focus on what the agent is allowed to hear.

2. Output Guardrails - LLM to User

Output guardrails act as the final checkpoint before the agent’s response is delivered to the user.

The most common use case is reducing hallucinations or unsupported claims. However, their scope is broader. Output guardrails ensure that the response is safe, appropriate, and aligned with organizational policy or brand tone.

For example, consider an agent responding to a patient in a healthcare setting. Beyond factual correctness, the system must ensure that the tone is empathetic, that no unsafe medical advice is delivered outside permitted boundaries, and that messaging aligns with institutional guidelines.

Output guardrails provide that last layer of validation in an otherwise probabilistic system.

How Are Guardrails Implemented?

Guardrails are not a single product or feature. They represent a multi-layered architectural strategy that can be implemented in several ways.

Deterministic Business Rules

Simple but powerful rules can be embedded directly into application logic. These may include regex filters for specific keywords, character limits, schema validation, structured output enforcement, or hard-coded policy constraints. Deterministic rules introduce predictability into the system.

Reusable APIs or Modules

Guardrail logic can be abstracted into internal services or third-party APIs. This approach makes them reusable across multiple agents without tightly coupling them to any single agent’s reasoning logic. For example, a centralized PHI detection service can serve multiple agent workflows.

Agents as Guardrails in Multi-Agent Frameworks

In more advanced architectures, agents themselves can function as guardrails. A smaller, internally hosted model can act as an input guardrail that reliably detects and blocks PHI before forwarding a scrubbed prompt to an external LLM. Similarly, a validation agent can review outputs before they are released to users.

This layered approach enables scalable, modular safety enforcement.

What Happens When a Guardrail Is Triggered?

When a guardrail is triggered, the system must respond in a predictable and controlled manner. The outcome depends on the design and severity of the trigger.

Common responses include:

Immediate termination of the request with a predefined message such as “This request does not follow usage guidelines.”

Blocking or rewriting the input or output before proceeding

Escalation to a human-in-the-loop workflow, where control is gracefully handed off to a human operator

Designing these response pathways is just as important as detecting violations. Safety is not only about prevention. It is also about controlled recovery.

Guardrails as First-Class Citizens

Guardrails should not be an afterthought layered on top of an agent. They should be treated as first-class architectural components within the agent platform.

Robust guardrail design is what enables AI systems to move from experimentation to enterprise deployment. I would be interested to hear how others are addressing the non-deterministic nature of LLMs and what safety architectures have proven effective in real-world deployments.