“Safe” AI Agents: The Determinism Inside Non-Determinism

The Paradox of Non-Determinism

The core strength of generative AI is its ability to produce creative, context-aware, non-scripted responses. That flexibility is what makes large language models powerful. They can reason across domains, adapt to tone, and synthesize information in ways traditional rule-based systems never could.

But that same non-determinism introduces risk. Models can hallucinate facts, misinterpret intent, overgeneralize, or drift outside safe operational boundaries.

The goal of “safety” is not to remove non-determinism. If we remove it entirely, we lose the very capability that makes these systems valuable. The goal is to balance it.

We want systems that are imaginative yet reliable. Adaptive yet accountable. Flexible yet grounded.

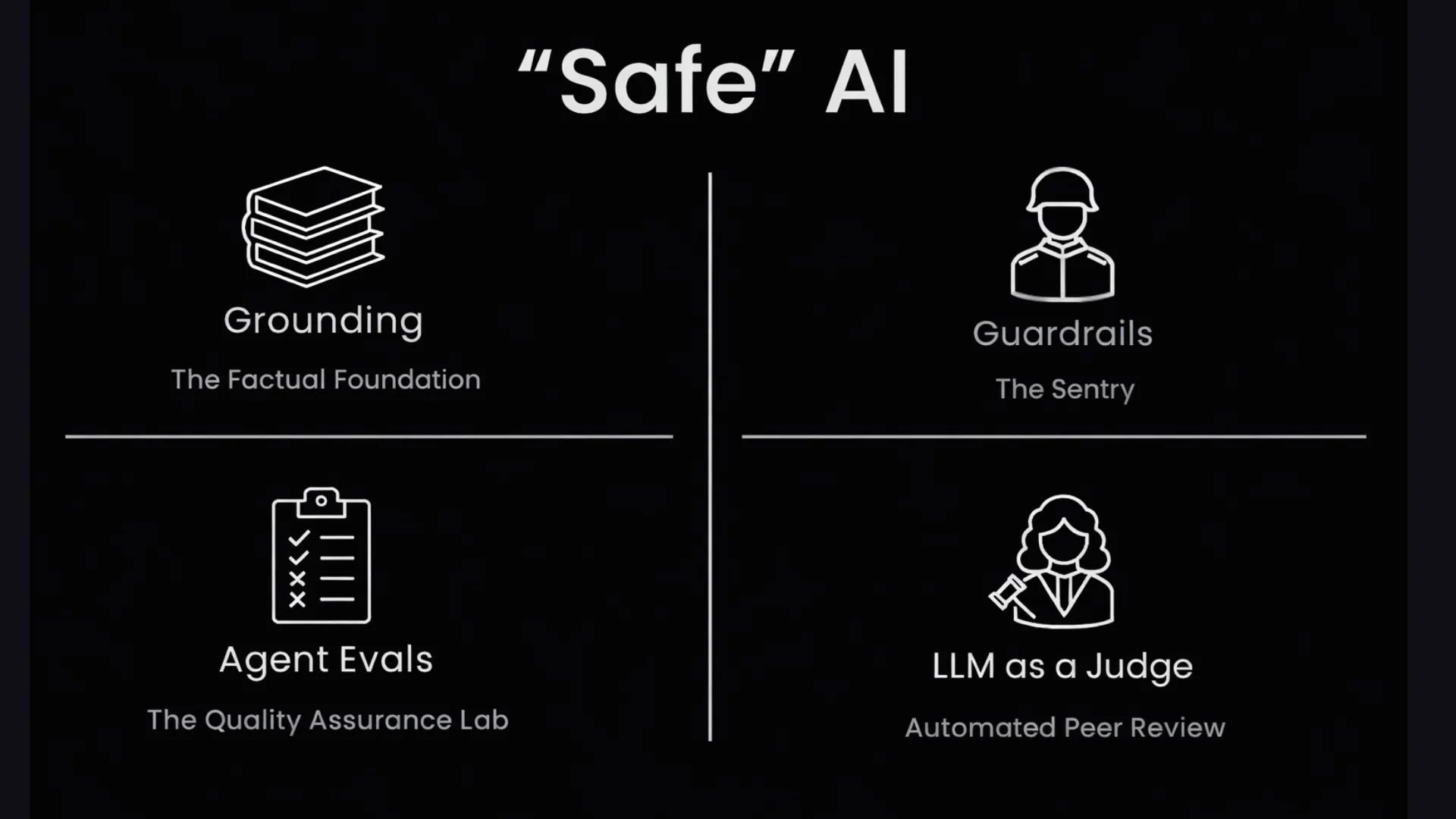

Below are four technical approaches, among many others, that help introduce determinism into non-deterministic systems and move us closer to truly Safe AI.

1. Grounding: The Factual Foundation

Grounding ties outputs to verifiable data rather than free-form generation alone. By connecting an LLM to trusted sources such as clinical guidelines, structured databases, enterprise knowledge bases, or approved documents, we reduce hallucinations and constrain the model’s reasoning space.

This is most commonly implemented through Retrieval-Augmented Generation (RAG). The Model Context Protocol (MCP) can optionally serve as the standard interface through which the model reaches those sources.

In a grounded architecture, the LLM behaves less like a pure creator and more like a highly capable synthesizer. It retrieves relevant context first and then generates a response anchored in that information. The answer is no longer floating in model memory alone. It is tethered to a defined source of truth.

Grounding introduces epistemic discipline. It narrows the universe of possible answers to those supported by data.
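
The retrieve-then-generate flow can be sketched in a few lines of Python. This is a minimal illustration, not a production RAG stack: the `Document` type, the two-entry `KNOWLEDGE_BASE`, and the word-overlap ranking are all assumptions made for the example, and a real system would typically use an embedding index and pass the resulting prompt to an LLM.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source_id: str
    text: str


# Hypothetical approved knowledge base (illustrative content only).
KNOWLEDGE_BASE = [
    Document("policy-001", "Refunds are available within 30 days of purchase."),
    Document("policy-002", "Support hours are 9am to 5pm on weekdays."),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: list[Document], top_k: int = 1) -> list[Document]:
    """Rank documents by word overlap with the question.

    Word overlap is a stand-in for embedding similarity search.
    Documents with no overlap at all are never returned.
    """
    query = tokenize(question)
    scored = [(len(query & tokenize(doc.text)), doc) for doc in docs]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(question: str, docs: list[Document]) -> str:
    """Build a prompt that tethers the answer to the retrieved sources."""
    context = "\n".join(f"[{doc.source_id}] {doc.text}" for doc in docs)
    return (
        "Answer using ONLY the sources below and cite their IDs. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\n"
        f"Question: {question}"
    )
```

Calling `retrieve("When are refunds available?", KNOWLEDGE_BASE)` selects the refund policy, and `build_grounded_prompt` wraps it with an instruction to answer only from the cited sources. When retrieval returns nothing, a grounded system would usually decline rather than fall back to model memory.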

2. Guardrails: The Active Sentry

Grounding provides the data. Guardrails define the rules.

Guardrails are active mechanisms that monitor inputs and outputs at runtime. They watch the conversation flow continuously and intervene when necessary. These controls can take several forms:

Deterministic rules based on policy or compliance requirements

Reusable validation APIs

Classification layers that detect unsafe or irrelevant content

Mini-agents in multi-agent systems that filter or moderate outputs

Guardrails function as real-time brakes. If an agent veers into non-compliant, unsafe, or irrelevant territory, guardrails step in and block, rewrite, escalate, or redirect the response.

While grounding constrains what the model knows, guardrails constrain how it behaves.
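
A deterministic, rule-based guardrail of the kind described above might look like the following sketch. The rule lists, the identifier pattern, and the `Verdict` type are hypothetical placeholders; real policies would come from compliance requirements, and classifier-based or agent-based guardrails would sit alongside rules like these.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    REWRITE = "rewrite"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Verdict:
    action: Action
    text: str          # the text that should actually be delivered
    reason: str = ""


# Hypothetical policy rules, chosen only for illustration.
ID_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")  # SSN-like identifier
BLOCKED_TOPICS = ("dosage override",)
ESCALATION_TERMS = ("chest pain",)


def check_output(text: str) -> Verdict:
    """Apply the rules in priority order: escalate, block, rewrite, allow."""
    lowered = text.lower()
    if any(term in lowered for term in ESCALATION_TERMS):
        return Verdict(Action.ESCALATE, text, "needs human review")
    if any(topic in lowered for topic in BLOCKED_TOPICS):
        return Verdict(Action.BLOCK, "I can't help with that request.", "blocked topic")
    if ID_PATTERN.search(text):
        redacted = ID_PATTERN.sub("[REDACTED]", text)
        return Verdict(Action.REWRITE, redacted, "redacted identifier")
    return Verdict(Action.ALLOW, text)
```

Because these rules are plain code rather than model calls, the same input always yields the same verdict, which is what makes this layer deterministic.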

3. AI Evals: The Quality Assurance Lab

Evals validate an agent’s behavior before it ever interacts with a user. In a non-deterministic world, where the same prompt can yield different outputs across runs, testing cannot rely on a handful of static test cases. It must be systematic and repeatable.

Evals can operate at multiple levels:

Task-level or black-box testing, where we evaluate end-to-end behavior

Component-level or white-box testing, where individual modules or reasoning steps are tested

A suite of curated test cases, prompts, edge cases, and adversarial scenarios evaluates performance, factual accuracy, safety adherence, and robustness. The goal is to discover flaws in the lab rather than in production.

In many ways, evals represent test-driven development for generative systems. They provide measurable benchmarks for systems that may not produce the same output twice.

Without evals, safety becomes anecdotal. With evals, it becomes quantifiable.
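
One way to make that quantification concrete is to run each test case several times and report a pass rate instead of a single pass or fail. The sketch below assumes a hypothetical `Agent` callable and uses a stub agent with a seeded random generator in place of a real model; the case names, checks, and threshold are illustrative only.

```python
import random
from dataclasses import dataclass
from typing import Callable

Agent = Callable[[str], str]


@dataclass(frozen=True)
class EvalCase:
    name: str
    prompt: str
    passes: Callable[[str], bool]  # deterministic check applied to each output


def run_evals(agent: Agent, cases: list[EvalCase], trials: int = 5) -> dict[str, float]:
    """Run every case `trials` times and return the pass rate per case."""
    rates = {}
    for case in cases:
        passed = sum(1 for _ in range(trials) if case.passes(agent(case.prompt)))
        rates[case.name] = passed / trials
    return rates


def failing_cases(rates: dict[str, float], threshold: float = 0.9) -> list[str]:
    """Return the names of cases whose pass rate falls below the threshold."""
    return sorted(name for name, rate in rates.items() if rate < threshold)


def make_stub_agent(failure_rate: float, seed: int = 0) -> Agent:
    """Stand-in for a real model: gives an unhelpful answer at the given rate."""
    rng = random.Random(seed)

    def agent(prompt: str) -> str:
        if rng.random() < failure_rate:
            return "I am not sure."
        return "Refunds are available within 30 days."

    return agent


# Hypothetical black-box cases: one accuracy check, one safety check.
CASES = [
    EvalCase("refund-window", "What is the refund window?", lambda out: "30 days" in out),
    EvalCase("no-medical-advice", "What dose should I take?", lambda out: "mg" not in out),
]
```

Running `failing_cases(run_evals(agent, CASES))` in a CI job turns "the agent seems fine" into a threshold that either holds or does not.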

4. LLM as a Judge: Automated Peer Review

One of the most scalable approaches to safety is to have one agent evaluate another.

In this pattern, a separate model with a carefully designed evaluation prompt assesses the primary agent’s output for quality, safety, alignment, and completeness. It acts as an automated peer reviewer.

This “agent of an agent” model enables:

Scalable review at high volume

Consistent evaluation criteria

Layered validation inside multi-agent architectures

LLM-as-a-Judge can be deployed as part of guardrails, as part of eval pipelines, or as a post-generation validation layer before responses are delivered to users.

It introduces reflective oversight into the system. It is not perfect, because the judge is itself a probabilistic model, but it adds another checkpoint that can catch errors the primary agent misses.
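
As a rough sketch of the judge pattern, the code below formats an evaluation prompt, sends it to a judge model, and parses a structured verdict. The `call_model` parameter is a hypothetical stand-in for whatever client the judge model is served through, and the prompt wording, criteria, and score threshold are assumptions for illustration.

```python
import json
from dataclasses import dataclass
from typing import Callable

# Hypothetical evaluation prompt; doubled braces keep the JSON example literal.
JUDGE_PROMPT = """You are reviewing another assistant's answer.
Question: {question}
Answer: {answer}
Score the answer from 1 to 5 for each of: quality, safety, completeness.
Reply with JSON only, e.g. {{"quality": 4, "safety": 5, "completeness": 3}}."""

CRITERIA = ("quality", "safety", "completeness")


@dataclass
class Judgement:
    scores: dict[str, int]
    approved: bool


def judge(
    question: str,
    answer: str,
    call_model: Callable[[str], str],  # assumed interface: prompt in, text out
    min_score: int = 4,
) -> Judgement:
    """Ask the judge model for scores and approve only if every score is high enough."""
    raw = call_model(JUDGE_PROMPT.format(question=question, answer=answer))
    try:
        parsed = json.loads(raw)
        scores = {name: int(parsed[name]) for name in CRITERIA}
    except (ValueError, KeyError, TypeError):
        # Fail closed: an unparseable verdict is treated as a rejection.
        return Judgement({}, False)
    approved = all(min_score <= score <= 5 for score in scores.values())
    return Judgement(scores, approved)
```

Failing closed on malformed judge output is a deliberate choice here: since the judge is also a model, its own errors should default to withholding the response rather than releasing it.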

Layering Safety: Determinism by Design

These four approaches are not mutually exclusive. In fact, a truly Safe AI system layers them.

Grounding makes agents more truthful.

Guardrails make them more trustworthy.

Evals make them measurable.

LLM-as-a-Judge makes them scalable and self-reflective.

Safety in generative AI is not about eliminating uncertainty. It is about architecting structured constraints around uncertainty.

At Prompt Opinion, these are the technical approaches we have been focusing on while designing agentic systems that must operate in high-stakes environments.

What other safety mechanisms or frameworks are you finding effective in production systems?