AI in Healthcare: Clinical DRS

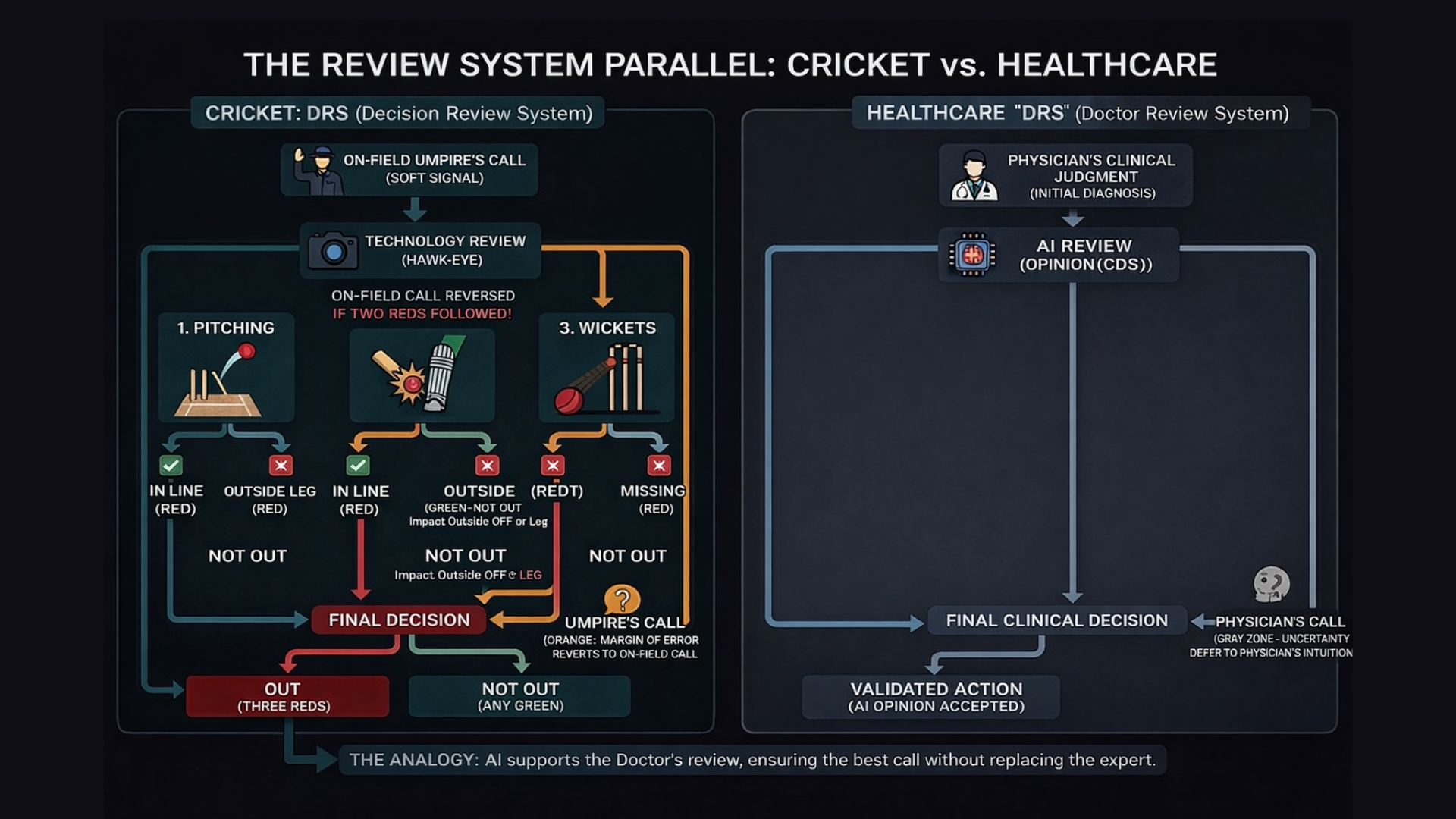

For any sports fan, there is nothing more frustrating than a game decided by a clearly wrong call. In cricket, we lived with that frustration for decades until the arrival of the Decision Review System (DRS). DRS introduced technology into decision-making, but it was never meant to replace the umpire. It was built to support the umpire.

The earliest use of technology in umpiring focused on line decisions: situations that are black and white, like a run-out. Either the bat was grounded behind the line in time or it was not. Technology brought clarity, reduced controversy, and delivered immediate value.

But the real test of DRS came with LBW (Leg Before Wicket).

LBW is not a simple line call. It is a prediction based on multiple variables, and the decision depends on three criteria:

Pitching: Did the ball land in the valid zone? If it pitched outside leg stump, the batter is not out and the review ends there.

Impact: Did it hit the pad in line with the stumps? There is nuance here: if the batter did not offer a shot, an impact outside off stump can still be given out.

Wickets: Was the ball actually going to hit the stumps?

The ball-tracking technology behind this is extraordinary. Yet the most important feature of DRS is not its sophistication. It is its humility.

Even with advanced tracking, the system acknowledges a margin of error. If the ball is only marginally clipping the stump, the screen flashes orange for "Umpire’s Call," and the on-field decision stands.

The technology defers to the human’s better vantage point.
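The three LBW criteria, with Umpire’s Call as the fallback for marginal projections, can be sketched as a small decision function. This is a simplified illustration, not the official playing conditions: the `Zone`, `Delivery`, and `review_lbw` names are hypothetical, only the wickets criterion gets an Umpire’s Call band here, and the 50% "marginal" threshold is an assumption made for the sketch.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    IN_LINE = "in line"
    OUTSIDE_OFF = "outside off"
    OUTSIDE_LEG = "outside leg"


# Assumed threshold for this sketch: if less than half of the ball is
# projected to hit the stumps, the projection is "marginal" (Umpire's Call).
MARGINAL_FRACTION = 0.5


@dataclass
class Delivery:
    pitching: Zone           # where the ball landed
    impact: Zone             # where it struck the pad
    shot_offered: bool       # did the batter make a genuine attempt to play?
    fraction_hitting: float  # share of the ball projected to hit the stumps, 0.0-1.0
    on_field_out: bool       # the on-field umpire's original decision


def review_lbw(d: Delivery) -> str:
    """Return the outcome of a simplified LBW review."""
    # Pitching: outside leg ends the review immediately.
    if d.pitching is Zone.OUTSIDE_LEG:
        return "NOT OUT"
    # Impact: outside leg is never out; outside off only counts if no shot was offered.
    if d.impact is Zone.OUTSIDE_LEG:
        return "NOT OUT"
    if d.impact is Zone.OUTSIDE_OFF and d.shot_offered:
        return "NOT OUT"
    # Wickets: a ball projected to miss entirely is not out.
    if d.fraction_hitting <= 0.0:
        return "NOT OUT"
    # Marginal projection: the technology defers and the on-field call stands.
    if d.fraction_hitting < MARGINAL_FRACTION:
        original = "OUT" if d.on_field_out else "NOT OUT"
        return f"UMPIRE'S CALL ({original} stands)"
    return "OUT"


# A ball only clipping the stumps, originally given not out:
print(review_lbw(Delivery(Zone.IN_LINE, Zone.IN_LINE, True, 0.3, False)))
# prints: UMPIRE'S CALL (NOT OUT stands)
```

Note that the order of the checks mirrors the review itself: each criterion can end the review early, and only a delivery that passes all three reaches the question of how confident the projection is.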

That principle is the perfect analogy for where AI in healthcare stands today.

The Safe Zone: Administrative and Revenue Workflows

In 2025, we saw tremendous growth in AI across revenue cycle management, coding, documentation, and administrative workflows. These use cases resemble line decisions in cricket. They are structured, rules-based, and often close to binary.

Claims either meet criteria or they do not.

Codes align with documentation or they do not.

Prior authorization data is complete or incomplete.

The ROI is clear. The risk is contained. The safe zone is comfortable.

This is important progress, but it is not where the deepest clinical value lies.

Medicine Is Full of LBW Moments

Clinical medicine is rarely binary. It is filled with borderline decisions, competing guidelines, incomplete data, and probabilistic reasoning. These are LBW moments.

A diagnosis may depend on trajectory rather than a single value.

A treatment choice may balance marginal benefit against side effects.

A lab result may be technically abnormal but clinically insignificant in context.

In these moments, we do not need AI to force a decision. We need AI to:

Process vast amounts of structured and unstructured data

Surface relevant guidelines and evidence

Model trajectories and risk

Reduce cognitive load

Highlight uncertainty rather than hide it

And when the case enters the Orange Zone, when uncertainty is high and the margin is thin, the system must respect Umpire’s Call. In healthcare, that means deferring to physician judgment.

AI should support clinical reasoning, not override it.

Moving Beyond Run-Outs

Transitioning from administrative automation to clinical decision support is significantly harder. The stakes are higher, the variability is greater, and the margin for error is narrower.

But the upside is also far greater:

Improved diagnostic accuracy

Better alignment with evidence-based guidelines

Reduced physician burnout

More consistent care delivery

Better patient outcomes

If we design AI systems that operate like DRS, we can move confidently into clinical workflows while preserving professional judgment and accountability.

This is not about replacing doctors. It is about building a Doctor’s Review System that enhances how decisions are made.

The Doctor’s Review System Philosophy

The next phase of AI in healthcare will be about embedding structured review layers into complex clinical decisions.

Technology should:

Intervene where clarity is high

Illuminate uncertainty where complexity is high

Defer when judgment carries contextual weight
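These three rules can be read as a triage policy. A minimal sketch, assuming a hypothetical model that returns a risk estimate together with an uncertainty margin: every name here (`Action`, `Assessment`, `triage`, `needs_context`) is illustrative, and the 0.5 decision threshold and 0.05 "clear" margin are placeholder values that any real deployment would have to calibrate and validate clinically.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    INTERVENE = "intervene"    # clarity high: the system may act or pre-fill a recommendation
    ILLUMINATE = "illuminate"  # complexity high: surface evidence and uncertainty, do not act
    DEFER = "defer"            # Orange Zone: the physician's call stands


@dataclass
class Assessment:
    risk: float          # hypothetical model's point estimate, 0.0-1.0
    margin: float        # half-width of the model's uncertainty interval
    needs_context: bool  # hypothetical flag: factors the model cannot see


def triage(a: Assessment, threshold: float = 0.5, max_clear_margin: float = 0.05) -> Action:
    """Map a model assessment to an action (placeholder thresholds, not clinical values)."""
    low, high = a.risk - a.margin, a.risk + a.margin
    # Judgment that carries contextual weight always goes back to the clinician.
    if a.needs_context:
        return Action.DEFER
    # The interval straddles the decision threshold: the "Umpire's Call" zone.
    if low <= threshold <= high:
        return Action.DEFER
    # Clearly on one side of the threshold, but the estimate is wide: show it, don't act on it.
    if a.margin > max_clear_margin:
        return Action.ILLUMINATE
    return Action.INTERVENE


print(triage(Assessment(risk=0.55, margin=0.10, needs_context=False)).value)
# prints: defer
```

The design mirrors DRS: the system acts only when its whole uncertainty interval sits clearly on one side of the threshold, and an interval that crosses the threshold is treated like Umpire’s Call, so the clinician’s decision stands.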

That is how trust is built.

If you are looking to move beyond the run-outs and focus on generative AI for clinical workflows, I would love to connect. We are working on projects launching early next year that bring this Doctor’s Review System philosophy to life in real clinical environments.

Let’s connect.