Guardrails for AI Agents: A Mask Analogy for Safe AI

Over the last few days, I have been discussing a 7-layered structure to understand AI agents. The layer that generates the most confusion and questions is Layer 5: Guardrails.

Guardrails are the mechanisms designed to manage the unpredictable, non-deterministic nature of large language models. They ensure that AI agents operate within clearly defined ethical, legal, and operational boundaries. Without guardrails, even a highly capable agent can drift into unsafe, non-compliant, or irrelevant territory.

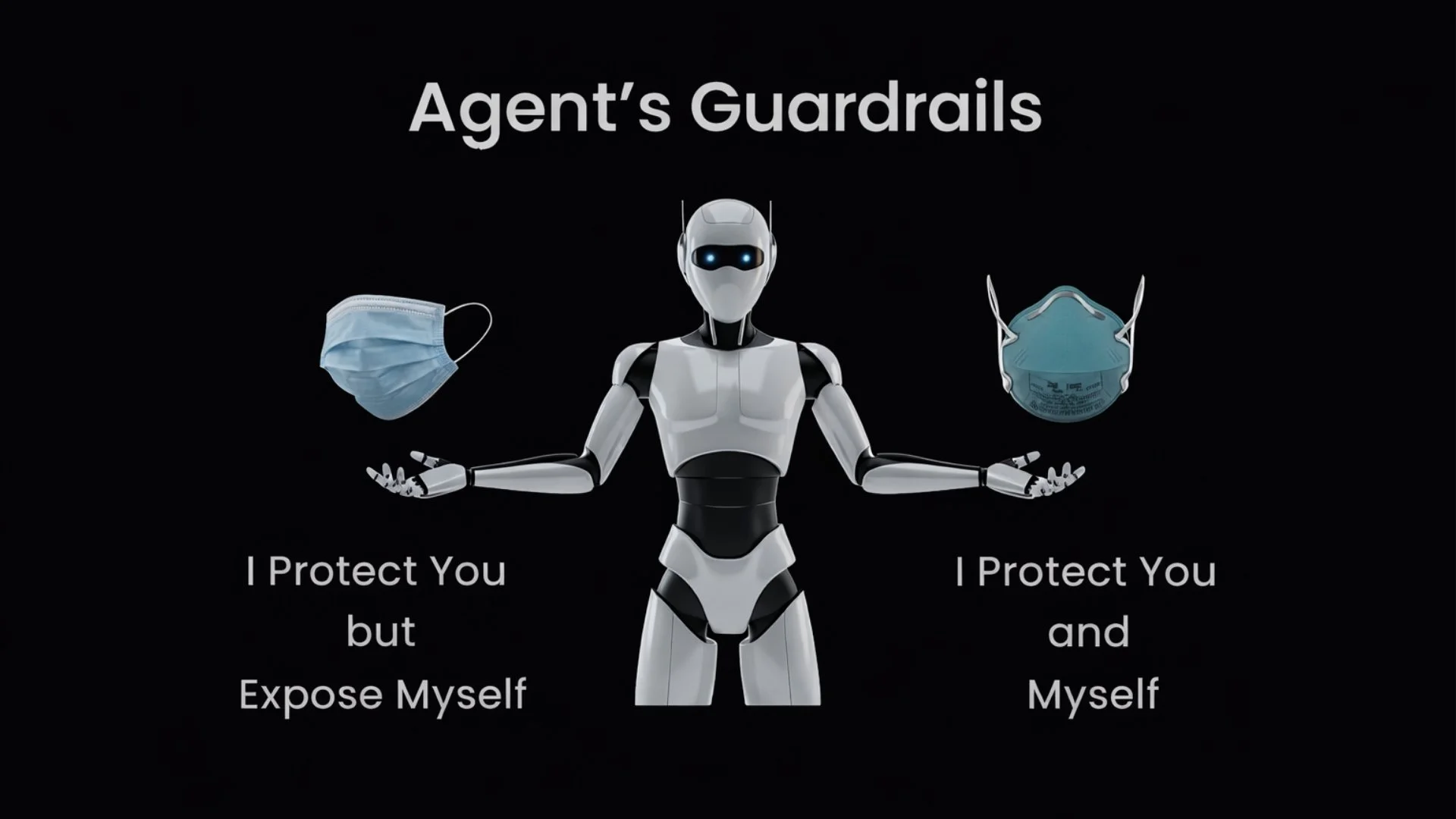

A critical principle is this: guardrails must protect the user from the agent, and they must also protect the agent from the user.

A simple way to understand these two core functions is through an analogy many of us became familiar with during COVID: masks.

1. Output Guardrails - I Protect You

Output guardrails are like surgical or cloth masks. Their primary function was to protect others by preventing the wearer from spreading germs. When everyone followed this practice, it protected the broader community.

In the AI context, output guardrails protect the user from the agent’s potential flaws. They operate on the model’s response before it is delivered to the user. Their purpose is to ensure that what leaves the system is safe, accurate, and aligned with policy.

Output guardrails typically address:

Reducing hallucinations or unsupported claims

Ensuring tone is appropriate, especially in sensitive domains such as healthcare or finance

Validating alignment with internal organizational or brand guidelines

Preventing the dissemination of inaccurate, unsafe, or non-compliant information

Grounding can reduce hallucinations, but output guardrails act as the final checkpoint. They are the last line of defense before the answer reaches the user.

2. Input Guardrails - I Protect You and Myself

Input guardrails are closer to N95 masks. They protect both the wearer and those around them.

These guardrails act on the user’s prompt before it reaches the LLM. Their goal is to filter, validate, and sometimes transform the input to ensure the system is not manipulated or exposed to unnecessary risk.

Input guardrails address risks such as:

Prompt injection attacks

Jailbreaking attempts designed to override system instructions

Malicious or adversarial inputs

Accidental submission of sensitive information, such as PHI

In regulated industries, this is especially important. A user might unintentionally paste protected health information into a system not configured to handle it. Input guardrails can detect and redact such data before it reaches the core model.

If output guardrails focus on what the agent says, input guardrails focus on what the agent hears.

How are Guardrails Implemented?

Guardrails are not a single feature or product. They represent a multi-layered strategy that spans architecture, policy, and runtime controls.

Common implementation patterns include:

Deterministic Business Rules

These are explicit rules embedded directly into operational layers of the agent. They may include keyword filters, word limits, structured output constraints, escalation triggers, or hard-coded compliance checks. Deterministic rules introduce predictability into otherwise probabilistic systems.

Reusable Modules

Guardrail logic can be abstracted into reusable internal APIs or external third-party services. For example, moderation services, PHI detection modules, or policy enforcement layers can operate independently of any single agent. This decoupling improves maintainability and scalability.

Agents as Guardrails

In multi-agent frameworks, one agent can act as a guardrail for another. For example, a specialized PHI detection agent, potentially based on a self-hosted model, can inspect and scrub prompts before forwarding them to a primary reasoning agent. Similarly, a validation agent can review outputs before release.

This layered approach allows guardrails to function as first-class architectural components rather than afterthoughts.

Guardrails are About Trust, Not Just Control

AI agents cannot be considered production-ready without robust guardrails. A weak guardrail strategy is like wearing the wrong mask. It creates a false sense of security while leaving critical vulnerabilities exposed.

True safety does not come from a single filter or rule. It comes from intentional design, layered controls, and continuous monitoring.

At Prompt Opinion, we focus on designing and embedding guardrails as first-class citizens within agent platforms. If you are building a production-grade agent system and want to think through guardrail architecture at depth, we would be glad to connect and explore how to implement these controls effectively.