Evaluating AI in Healthcare: Beyond the LLM

Most conversations about evaluating AI systems in healthcare focus almost entirely on evaluating large language models. Benchmarks, hallucination rates, accuracy scores, and prompt robustness dominate the discussion.

But in practice, we do not deploy LLMs in isolation. We deploy them through applications and agents.

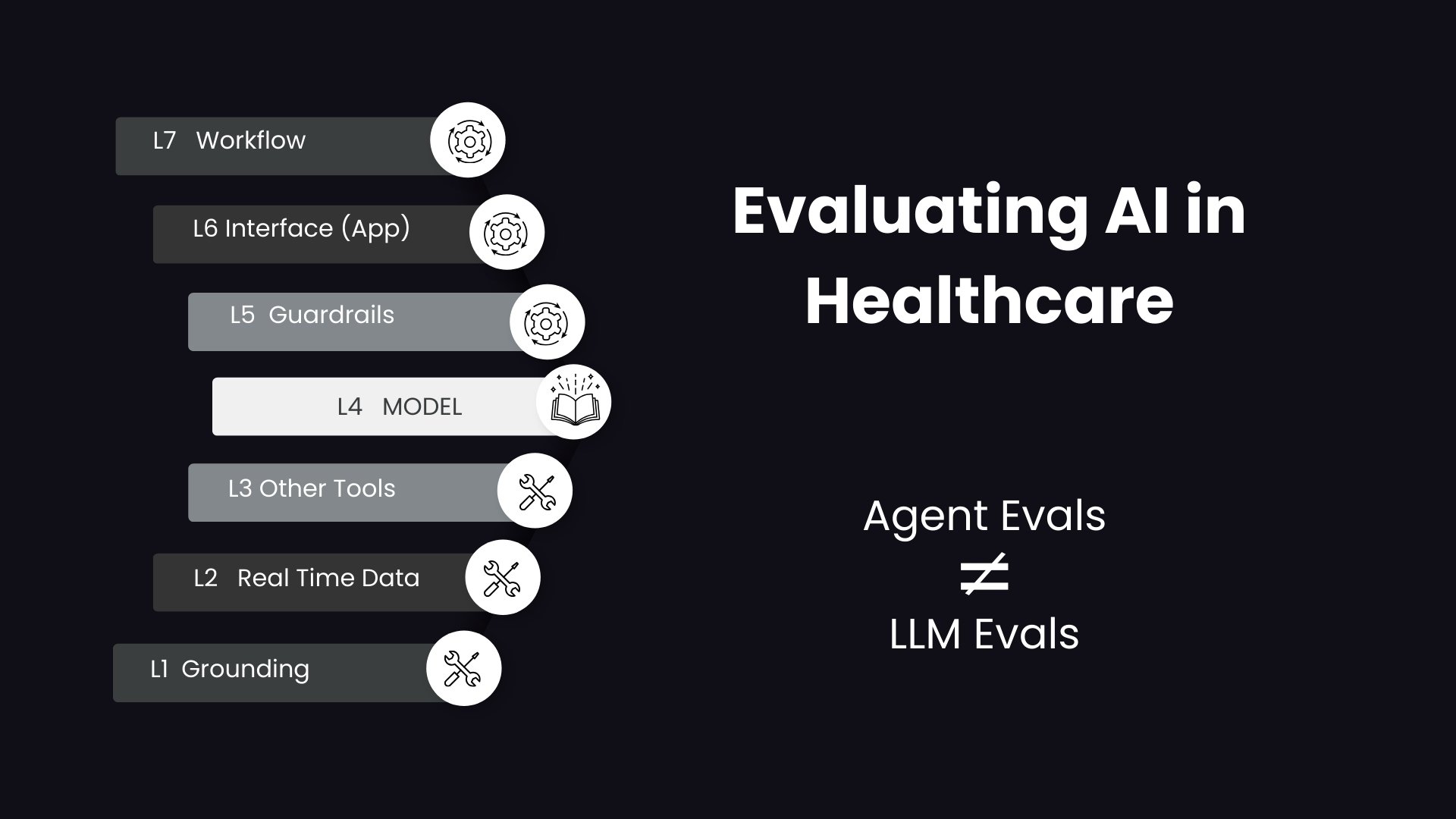

An LLM is only one layer in a much more complex stack. When AI operates in production, it interacts with data sources, tools, workflows, guardrails, orchestration layers, and user interfaces. Evaluating the model alone misses the system-level reality.

If we are serious about deploying AI safely in healthcare, we must evaluate the agent as it performs in the real world across all layers of the stack.

Broadly, this evaluation can be grouped into two categories: task-level outcome evaluation and component-level process verification.

1. Task-Level Evaluation: The Outcome Test

Task-level evaluation is essentially black-box testing. It focuses on the final outcome from the user’s perspective and asks a simple but critical question:

Did the agent achieve the goal?

Examples include:

Was the follow-up appointment scheduled correctly?

Did the prior authorization get submitted with complete documentation?

Did the triage workflow route the case to the appropriate clinician?

Were the references accurate and relevant?

At this level, we are less concerned with how the agent arrived at the answer and more concerned with whether it delivered the correct, usable result.

In many cases, this type of evaluation involves human users, especially when measuring usability, clinical relevance, or workflow fit. LLMs can also be used as evaluators to score outputs at scale, but real-world validation often requires user feedback and outcome tracking.

Outcome evaluation tells us whether the system works. It does not tell us why.

2. Component-Level Verification: The Process Audit

Component-level evaluation is white-box testing. It audits the internal method and focuses on how the agent utilizes its configured resources.

In healthcare, this layer is critical because correctness is not enough. Traceability, safety, and efficiency matter just as much.

Key questions include:

Did the agent select the most relevant tool for the task?

Did it correctly translate a vague user intent into structured arguments required by a tool?

Was the final response grounded and traceable to approved data sources?

Did safety layers effectively block PII or toxic inputs as intended?

Was the task completed with minimal latency, efficient context usage, and no redundant calls?

This is where structured automation becomes powerful. Standards such as Model Context Protocol support deterministic tool invocation, making it easier to verify how tools were called, what arguments were passed, and whether outputs remained within policy constraints.

Process verification ensures the system behaves predictably, not just occasionally correctly.

The Added Complexity of Multi-Agent Systems

As the ecosystem matures, evaluation becomes even more challenging. Model Context Protocol has emerged as a standard for implementing tool interactions. At the same time, Agent-to-Agent protocols are gaining traction as a way to structure multi-agent collaboration.

In many real-world healthcare scenarios, one orchestrating agent coordinates the work of several specialized agents. A clinical reasoning agent may call a documentation agent, which in turn queries a billing agent.

This introduces new evaluation questions:

Did the orchestrator delegate tasks appropriately?

Was context shared correctly between agents?

Did intermediate outputs introduce errors or drift?

Did cumulative latency remain acceptable?

Multi-agent systems add a new layer of orchestration risk that cannot be captured by evaluating a single LLM in isolation.

From Model Evaluation to System Evaluation

Evaluating AI in healthcare requires a shift in mindset. It is no longer sufficient to benchmark a model on a static dataset. We must evaluate the entire agentic system in production-like environments, measuring both outcomes and internal processes.

At Prompt Opinion, we are developing an Agent Evaluation Framework that incorporates these principles across outcome testing, process verification, tool auditing, and multi-agent orchestration.

If you are architecting complex use-case test suites, designing evaluation pipelines, or implementing structured MCP-based tooling, I would welcome the opportunity to connect and exchange perspectives on building robust evaluation strategies for healthcare AI.