Decoding AI Agents in Healthcare: The 7-Layer Stack

What exactly is an AI agent? Is it just a large language model? Is it ChatGPT wearing a lab coat?

These questions come up frequently. After extensive discussions, exploration of real-world use cases, and watching these systems come to life in healthcare settings, we realized that we needed a clearer mental model.

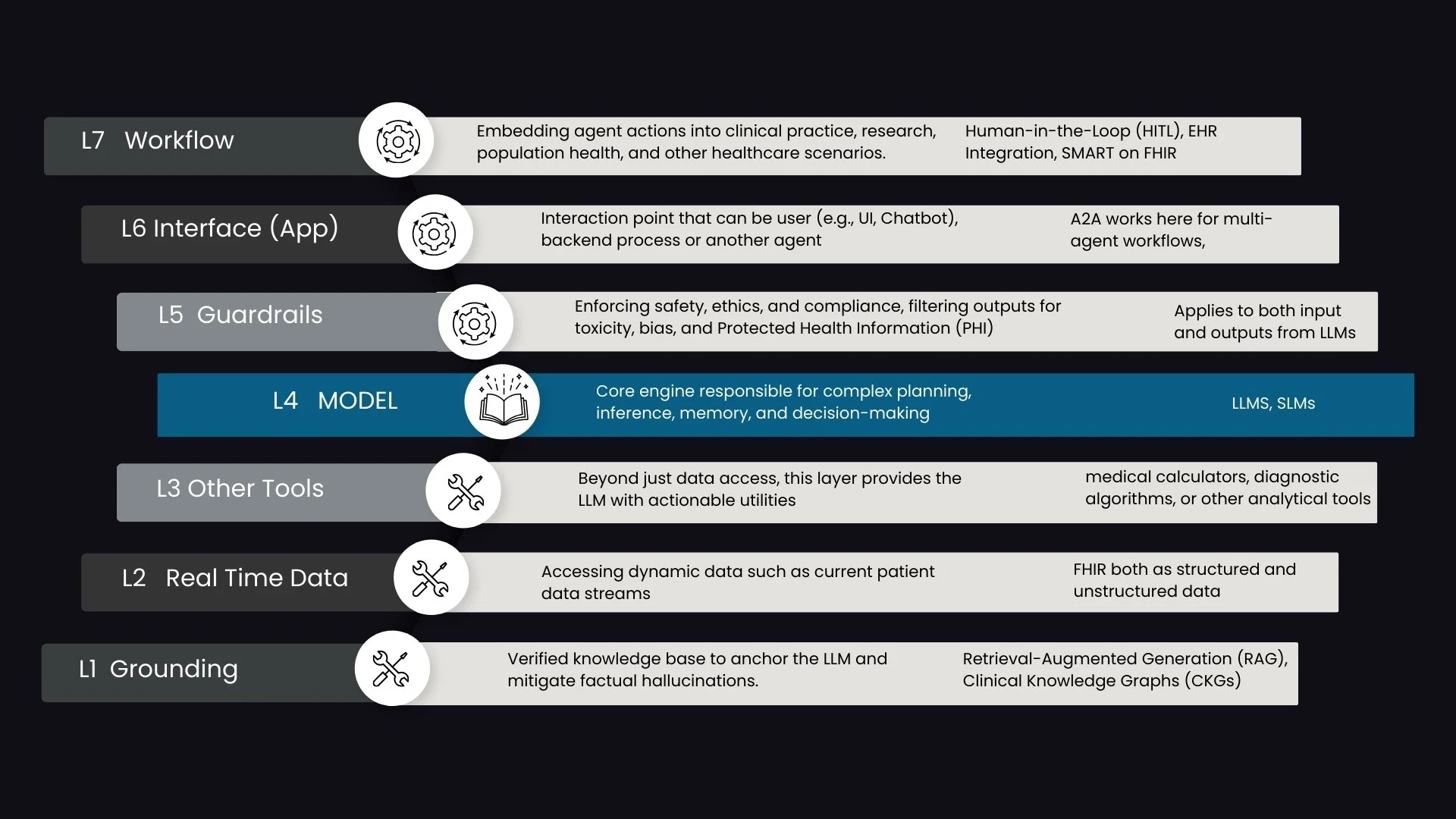

That is where the 7-layer stack comes in.

This framework is designed specifically to demystify AI agents in healthcare. It moves us beyond the simplistic idea that “LLM equals agent” and instead presents an architectural view of how intelligent systems actually function in practice.

At the center of the stack sits the Model, typically an LLM. But the model alone is not an agent. For an LLM to become autonomous and operational, it requires tools, governance, and workflow integration.

The Core: The Model (L4)

The Model layer (L4) is most often a large language model.

The model provides reasoning, language understanding, summarization, and contextual interpretation. It can synthesize evidence, interpret clinical notes, and respond to complex queries.

However, without access to tools and real-world systems, it remains a reasoning engine rather than an operational agent.

The Tooling Layers (L1, L2, L3)

For an LLM to act, it must be able to invoke tools. Tools can be thought of as functions or APIs that extend the model’s capabilities beyond text generation. The LLM determines which tool to use and when, typically by emitting a structured request (a tool name plus arguments) that the surrounding system executes on its behalf.
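To make the idea of tool calling concrete, here is a minimal sketch in Python. It assumes the model emits a JSON object with a `name` and `arguments` field; that shape, the `TOOLS` registry, and the `convert_lb_to_kg` tool are illustrative assumptions, not the format of any particular vendor or platform.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical registry mapping tool names to plain Python functions.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so it can be invoked by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def convert_lb_to_kg(pounds: float) -> float:
    """Convert pounds to kilograms (1 lb = 0.45359237 kg)."""
    return pounds * 0.45359237


def dispatch(tool_call_json: str) -> Any:
    """Execute a structured tool request produced by the model.

    The request shape {"name": ..., "arguments": {...}} is an assumption
    made for this sketch.
    """
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))
```

The model never runs code itself in this design. It only proposes a call, and the host application decides whether and how to execute it.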

L1: Grounding

This layer consists of verified, static knowledge bases. These may include clinical guidelines, curated research libraries, approved internal documents, or structured evidence repositories.

Grounding anchors the LLM in factual medical knowledge and reduces the risk of hallucinations. It helps keep reasoning tethered to a defined source of truth rather than relying solely on model memory.
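As a rough illustration of grounding, the sketch below retrieves passages from a small static knowledge base and builds a prompt that cites them. The keyword-overlap scoring is a deliberately naive stand-in for a real retrieval method, and the passages, source labels, and function names are placeholders invented for this example.

```python
import re
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class Passage:
    source: str
    text: str


# Placeholder content only; a real deployment would load curated documents.
KNOWLEDGE_BASE = [
    Passage("guideline-A", "Placeholder text about hypertension follow-up intervals."),
    Passage("guideline-B", "Placeholder text about diabetes screening criteria."),
]


def _terms(text: str) -> Set[str]:
    """Lowercase the text and split it into alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, kb: List[Passage], top_k: int = 1) -> List[Passage]:
    """Return up to top_k passages that share at least one term with the query."""
    query_terms = _terms(query)
    scored = [(len(query_terms & _terms(p.text)), p) for p in kb]
    matches = [(score, p) for score, p in scored if score > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in matches[:top_k]]


def build_grounded_prompt(question: str, passages: List[Passage]) -> str:
    """Place retrieved passages ahead of the question, labeled by source."""
    cited = "\n".join(f"[{p.source}] {p.text}" for p in passages)
    return f"Answer using only these sources:\n{cited}\n\nQuestion: {question}"
```

When nothing matches, `retrieve` returns an empty list, which gives the caller a natural point to decline to answer rather than fall back on model memory.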

L2: Real-Time Data

Healthcare decisions are contextual. Static knowledge alone is insufficient.

The Real-Time Data layer provides access to dynamic patient information from EHRs and other critical systems. This includes labs, vitals, medication lists, imaging reports, and longitudinal records.

By combining grounding with live patient data, the agent can reason within the appropriate clinical context.
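One way to picture this combination is a context builder that takes a guideline excerpt and the most recent value for each patient observation. The `Observation` type, its field names, and the sample values in the usage note are assumptions for illustration; they are not an EHR schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List


@dataclass(frozen=True)
class Observation:
    code: str            # hypothetical label such as a lab name
    value: float
    unit: str
    taken_at: datetime


def latest_by_code(observations: List[Observation]) -> Dict[str, Observation]:
    """Keep only the most recent observation for each code."""
    latest: Dict[str, Observation] = {}
    for obs in observations:
        current = latest.get(obs.code)
        if current is None or obs.taken_at > current.taken_at:
            latest[obs.code] = obs
    return latest


def build_context(guideline_excerpt: str, observations: List[Observation]) -> str:
    """Combine static guidance with the latest patient values."""
    lines = ["Guideline excerpt:", guideline_excerpt, "", "Latest patient values:"]
    for code, obs in sorted(latest_by_code(observations).items()):
        lines.append(f"- {code}: {obs.value} {obs.unit} ({obs.taken_at:%Y-%m-%d})")
    return "\n".join(lines)
```

For example, given two made-up potassium readings dated 2024-01-01 and 2024-01-03, only the later one appears in the context handed to the model.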

L3: Other Tools

This layer includes actionable utilities that allow the agent to perform specific tasks.

Examples include:

Medical calculators

Diagnostic algorithms

Risk scoring systems

Documentation generators

Write-back capabilities into structured systems

These tools transform the LLM from a passive reasoning engine into an operational system capable of executing meaningful actions.
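A medical calculator is perhaps the simplest example of such a tool. The sketch below computes body mass index (weight in kilograms divided by height in meters squared) and maps it to the standard WHO adult categories. The function names are our own, and a production tool would need validated inputs and clinical review.

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index: weight (kg) divided by height (m) squared."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight_kg and height_m must be positive")
    return weight_kg / height_m ** 2


def bmi_category(value: float) -> str:
    """Map a BMI value to the WHO adult categories."""
    if value < 18.5:
        return "underweight"
    if value < 25:
        return "normal"
    if value < 30:
        return "overweight"
    return "obese"
```

Delegating arithmetic to a deterministic function like this means the LLM only has to choose the tool and supply the inputs, not perform the calculation itself.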

The Operational Layers (L5, L6, L7): Bringing Agents into Practice

The top layers focus on how agents interact with users, systems, and sometimes other agents. This is where the architecture moves from theory to real-world deployment.

L5: Guardrails

Guardrails ensure safety, compliance, and reliability. They include mechanisms for filtering outputs, mitigating bias, managing PHI, and enforcing policy constraints.

In healthcare, this layer is critical. It protects patients, clinicians, and institutions from unsafe or non-compliant outputs. Guardrails manage the non-deterministic nature of LLMs and create structured boundaries for autonomous behavior.
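As a toy illustration of an output guardrail, the sketch below redacts two PHI-like patterns and withholds drafts that contain a blocked phrase. The patterns, the blocked phrase, and the replacement message are assumptions chosen for this example; real de-identification and policy enforcement require far more than a pair of regular expressions.

```python
import re
from dataclasses import dataclass
from typing import Dict

# Illustrative patterns only: US-style SSNs and email addresses.
PHI_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
}

# Hypothetical policy list; a real one would be maintained by compliance teams.
BLOCKED_PHRASES = ("stop taking your medication",)


@dataclass
class GuardrailResult:
    text: str
    redactions: Dict[str, int]
    blocked: bool


def apply_guardrails(draft: str) -> GuardrailResult:
    """Redact PHI-like spans, then withhold the draft if it violates policy."""
    text = draft
    redactions: Dict[str, int] = {}
    for label, pattern in PHI_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED-{label}]", text)
        if count:
            redactions[label] = count
    blocked = any(phrase in text.lower() for phrase in BLOCKED_PHRASES)
    if blocked:
        text = "This response was withheld and routed for clinician review."
    return GuardrailResult(text, redactions, blocked)
```

Because the filter runs on every draft regardless of what the model produced, it behaves deterministically even though the model does not.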

L6: Application or Interface

This is how the agent is exposed to the world.

Most commonly, we see conversational interfaces or chat-based experiences. However, the interface layer is broader than chat. Agents can be embedded within dashboards, workflow tools, or even exposed directly to other agents.

Agent-to-Agent communication operates at this layer. It allows specialized agents to collaborate, share context, and coordinate tasks in more complex scenarios.
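To show what agent-to-agent collaboration can look like at its simplest, here is an in-process sketch in which one agent sends a task and shared context to another through a registry. The `Message` fields, the `AgentRegistry` class, and the agent names are hypothetical; this is not the wire format of any specific agent-to-agent protocol.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Message:
    sender: str
    recipient: str
    task: str
    context: Dict[str, str] = field(default_factory=dict)


class AgentRegistry:
    """Routes messages to registered agent handlers by name."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[Message], Message]] = {}

    def register(self, name: str, handler: Callable[[Message], Message]) -> None:
        self._handlers[name] = handler

    def send(self, message: Message) -> Message:
        handler = self._handlers.get(message.recipient)
        if handler is None:
            raise KeyError(f"No agent registered as '{message.recipient}'")
        return handler(message)


def medication_agent(message: Message) -> Message:
    """Stand-in specialist agent: acknowledges the task and keeps shared context."""
    reply_context = {**message.context, "status": f"received: {message.task}"}
    return Message(
        sender="medication-agent",
        recipient=message.sender,
        task="reply",
        context=reply_context,
    )
```

The shared `context` dictionary is what lets the receiving agent pick up where the sender left off instead of starting from scratch.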

L7: Workflow

The workflow layer embeds agents directly into clinical and operational processes.

This includes:

Integration with EHR systems

Human-in-the-Loop mechanisms for oversight

Escalation pathways

Design choices that minimize disruption to existing workflows

Without workflow integration, an agent remains a standalone tool. With it, the agent becomes part of the care delivery infrastructure.
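Human-in-the-loop oversight and escalation can be sketched as a small routing rule. In the example below, every suggestion still ends with a human decision; the route names, the `confidence` score, and the 0.8 threshold are assumptions made for illustration rather than recommended values.

```python
from enum import Enum


class Route(Enum):
    ESCALATE = "escalate"                  # page a senior clinician now
    CLINICIAN_REVIEW = "clinician_review"  # needs a full manual review
    QUEUE_FOR_SIGNOFF = "queue_for_signoff"  # routine sign-off in the normal worklist


def route_suggestion(confidence: float, high_risk: bool, threshold: float = 0.8) -> Route:
    """Decide how an agent suggestion enters the clinical workflow.

    The threshold is a hypothetical tuning parameter, not a clinical standard.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if high_risk:
        return Route.ESCALATE
    if confidence < threshold:
        return Route.CLINICIAN_REVIEW
    return Route.QUEUE_FOR_SIGNOFF
```

Note that risk is checked before confidence, so a high-risk suggestion is escalated even when the agent is very confident.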

Standards That Enable the Stack

The Model Context Protocol (MCP) is one of the most common standards enabling the tooling layers. It structures how models interact with data sources and external tools.
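For a sense of what this looks like on the wire, MCP messages follow JSON-RPC 2.0, and a client invokes a tool with the `tools/call` method. The sketch below only builds such a request; it does not open a connection or use an MCP SDK, and the tool name and arguments in the usage note are hypothetical.

```python
import json
from itertools import count
from typing import Any, Dict

# Simple incrementing request ids for this sketch.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: Dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 request that asks an MCP server to run a tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)
```

A call such as `build_tool_call("get_latest_labs", {"patient_id": "example-123"})` would produce a request for a hypothetical lab lookup tool; which tools actually exist depends entirely on the server being used.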

Agent-to-Agent frameworks operate primarily at the interface layer (L6). They orchestrate communication among specialized agents to support complex healthcare use cases.

Together, these standards help create modular, interoperable systems rather than tightly coupled, proprietary architectures.

Moving Beyond “LLM Equals Agent”

This 7-layer stack provides a practical framework for understanding AI agents in healthcare. It clarifies that an agent is not just a model, but a layered system that integrates reasoning, tools, safety, interface design, and workflow orchestration.

Understanding these layers is essential for building safe, scalable, and interoperable AI systems.

At Prompt Opinion, we have developed a no-code platform that enables teams to build agents using this stack. Over the coming weeks, we will explore each layer in depth, including hands-on demonstrations of how these components come together in real-world healthcare scenarios.

If you are interested in an early preview, or in exploring how this stack can support your healthcare initiatives, we would be glad to connect.