When Do You Really Need AI Agents?

Finding the Agent Sweet Spot in Healthcare

There is a growing buzz that AI agents are the solution to every problem.

Let’s be clear. AI is not a single thing. It spans a wide spectrum of capabilities, from simple chat interfaces and predictive models to fully autonomous, multi-step agents that can reason, plan, and act.

The real question is not whether AI is useful. It is this:

When should you really start thinking about building agents?

Start with First Principles

A good technology should either enable something that was previously impossible or let us do something faster, better, or more cheaply than humans can on their own.

AI agents are powerful, but they are not universally necessary. If a deterministic workflow or a simple automation can solve the problem, adding an agent may only increase complexity.

The core superpower of agents comes from large language models. So it helps to ask a basic question: what are LLMs actually good at?

They are exceptionally good at:

Analyzing large volumes of text and documents

Summarizing complex information

Reasoning across disparate sources

Identifying patterns in unstructured text

Handling contextual, nuanced queries

They outperform humans in speed and scale when processing information. That is their comparative advantage.

Why Healthcare Is an Ideal Environment

When you examine healthcare, it becomes clear why agents feel so relevant.

Healthcare sits at the intersection of three major challenges in clinical decision-making.

1. A Lot of Data

Medical knowledge is expanding rapidly; some estimates suggest it doubles every few years. Clinicians are expected to stay current with evolving evidence, guidelines, and treatment protocols.

At the same time, patient data is exploding. Beyond traditional EHR records, clinicians now contend with:

Wearable data

Remote monitoring data

Patient-reported outcomes

Imaging and lab results

Longitudinal care histories

The sheer volume exceeds what any individual can realistically process in real time.

2. Unstructured Data

Much of this data is unstructured. Clinical notes, imaging reports, discharge summaries, pathology results, and research articles are not neatly formatted into database tables.

Traditional rule-based systems struggle with this type of data. They are built for structured fields and predefined logic.
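To make that limitation concrete, here is a minimal sketch of a hypothetical keyword rule applied to three invented, illustrative note snippets (not real patient data). The pattern and the function name `rule_flags_chest_pain` are assumptions for illustration only. A fixed pattern cannot tell an affirmed symptom from a negated one, and it misses paraphrases entirely.

```python
import re

# Hypothetical keyword rule of the kind a rule-based system might use:
# flag a note as mentioning chest pain if the exact phrase appears anywhere.
CHEST_PAIN_RULE = re.compile(r"\bchest pain\b", re.IGNORECASE)


def rule_flags_chest_pain(note: str) -> bool:
    """Return True if the fixed pattern matches anywhere in the note."""
    return CHEST_PAIN_RULE.search(note) is not None


# Invented example snippets, written to show typical failure modes.
notes = [
    "Pt reports chest pain radiating to left arm.",        # affirmed: rule fires (correct)
    "Patient denies chest pain or shortness of breath.",   # negated: rule still fires (false positive)
    "Complains of pressure in the chest on exertion.",     # paraphrased: rule misses it (false negative)
]

for note in notes:
    print(rule_flags_chest_pain(note), "|", note)
```

Patching each failure means adding more rules (negation lists, synonym lists), which is exactly the maintenance burden that grows with unstructured text.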

LLMs, however, are built to interpret and reason across unstructured text. This is where they shine.

3. Non-Deterministic Decision-Making

Medicine is not a simple if–else system. Clinical decisions are contextual, probabilistic, and often dependent on subtle factors.

Two patients with the same diagnosis may require different treatment plans. Guidelines inform decisions, but they rarely dictate them completely.

This non-deterministic environment is exactly where LLM-based agents add value. They can synthesize guidelines, patient history, and contextual nuance into structured insights that support human judgment.

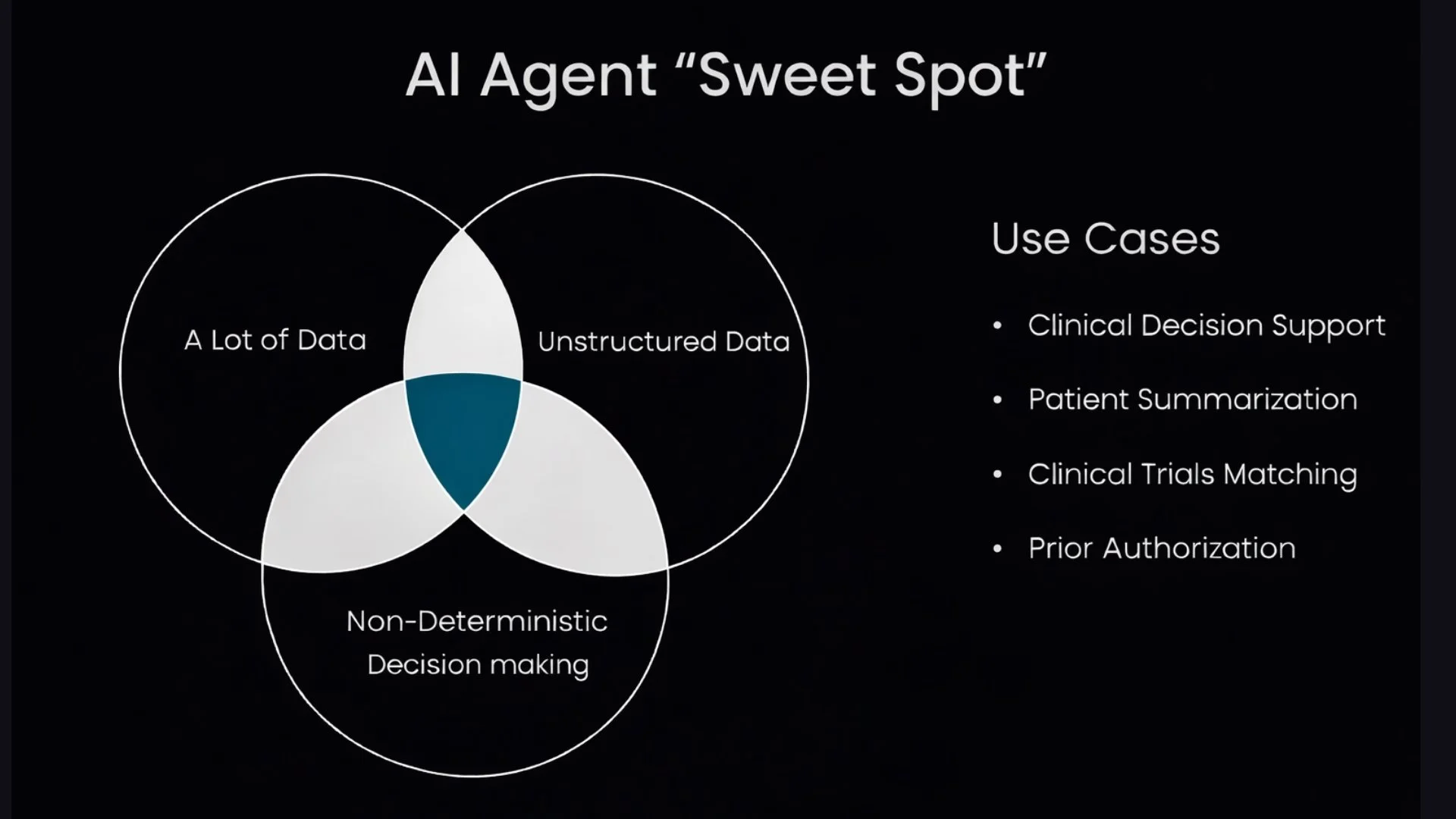

The Agent Sweet Spot

The true Agent Sweet Spot lies at the overlap of two or all three of these characteristics:

High data volume

Significant unstructured information

Non-deterministic decision-making

In healthcare, that overlap produces powerful use cases such as:

Clinical decision support

Patient summarization

Clinical trial matching

Prior authorization support

These are also the areas where traditional automation struggles the most. They require contextual understanding, flexible reasoning, and the ability to process heterogeneous data sources.

When a problem is structured, low-volume, and deterministic, a simple rules engine may be enough. When it sits in the overlapping zone of complexity, scale, and nuance, that is when agents begin to make sense.
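The decision rule above can be sketched as a simple checklist. This is a minimal illustration under stated assumptions, not a formal methodology: the `UseCase` fields, the trait values assigned to the example workflows, and the handling of the single-trait case (which the rule above leaves open) are all hypothetical choices made for the sketch.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    """A candidate workflow scored against the three sweet-spot traits (hypothetical)."""
    name: str
    high_data_volume: bool
    mostly_unstructured: bool
    non_deterministic: bool


def traits_present(case: UseCase) -> int:
    """Count how many of the three characteristics apply to this use case."""
    return sum([case.high_data_volume, case.mostly_unstructured, case.non_deterministic])


def suggest_approach(case: UseCase) -> str:
    """Map the trait count to a rough recommendation (thresholds are an assumption)."""
    count = traits_present(case)
    if count >= 2:
        return "consider an agent"  # overlap of two or three traits: the sweet spot
    if count == 1:
        return "evaluate case by case"  # one trait alone: not clearly agent territory
    return "rules engine or simple automation"  # structured, low-volume, deterministic


if __name__ == "__main__":
    candidates = [
        # Illustrative trait values, not a formal assessment of either workflow.
        UseCase("Clinical trial matching", True, True, True),
        UseCase("Appointment reminders", False, False, False),
    ]
    for case in candidates:
        print(f"{case.name}: {suggest_approach(case)}")
```

In practice each trait is a judgment call rather than a boolean, so a checklist like this is best used to structure the conversation about where an agent fits, not to replace it.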

Build Agents Where They Create Leverage

AI agents should not be deployed because they are fashionable. They should be deployed where they create leverage.

If the system must reason across evolving evidence, summarize unstructured patient data, and support nuanced decision-making at scale, agents can meaningfully augment human capability.

At Prompt Opinion, we have developed a framework to build agents that operate effectively across these clinical and operational scenarios. If you are exploring similar challenges or evaluating where AI agents fit within your workflows, I would be glad to connect and discuss how to identify your own agent sweet spot.