Let’s Talk About the Other Side of AI in Healthcare

In healthcare, AI is advancing on two powerful fronts.

The first is the intelligence layer. This includes the models powering clinical decision support, predictive analytics, risk scoring, and auto-documentation. It is the backend engine that drives insight.

The second is the interaction layer: the way clinicians actually reach that intelligence. Tools like ChatGPT have fundamentally reshaped how we expect to engage with software.

While backend intelligence continues to evolve rapidly, the shift that many clinicians are already feeling is happening at the interface level. We are no longer confined to buttons, dropdowns, and navigation trees. We are beginning to talk to software.

The expectation is changing. Clinicians want copilots, not just dashboards.

From Clicks to Conversations

Traditional healthcare software was built around structured interfaces. Forms, tabs, menus, and alerts defined the interaction model. Usability improvements meant reducing clicks or rearranging screens.

Conversational AI changes the paradigm. Instead of adapting to the software’s structure, users describe their intent in natural language. The system interprets that intent, retrieves relevant data, and responds in context.

This is not simply a UX trend. It represents a cognitive shift in how software aligns with human workflows.

However, conversational interfaces are only powerful if they are grounded in real data and embedded within real systems. A chatbot disconnected from clinical context is little more than a smart search box.

Why MCP Matters

This is where the Model Context Protocol (MCP) becomes important.

MCP is an open protocol that gives LLM-based chat interfaces a standard, controlled way to reach tools, models, and structured data in real time. Through an MCP server, a conversational interface can retrieve patient data from FHIR endpoints, invoke clinical calculators, trigger workflows, and return structured responses grounded in verified sources.
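To make this concrete, here is a minimal sketch of what a tool invocation looks like on the wire. MCP messages are JSON-RPC 2.0, and a tool is invoked with the `tools/call` method. The tool name `get_patient_observations` and its arguments are hypothetical, chosen only for illustration; a real MCP server defines its own tools and input schemas.

```python
import json
from itertools import count

# Simple counter so each request gets a unique JSON-RPC id.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments (assumptions, not part of any real server).
# 8480-6 is the LOINC code for systolic blood pressure.
request = build_tool_call(
    "get_patient_observations",
    {"patient_id": "example-123", "code": "8480-6"},
)
print(request)
```

The point of the sketch is that the chat interface never talks to the FHIR server directly. It asks a named tool for data, and the server behind that tool decides how the request is fulfilled.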

Instead of generating answers in isolation, the interface becomes connected to the clinical environment.

This transforms conversation into action.

Connected this way, an LLM can:

Retrieve relevant patient data

Apply evidence-based guidelines

Invoke workflow tools

Return traceable, contextual responses
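The steps above can be sketched as a small tool pipeline. This is an illustration under stated assumptions, not an MCP SDK example: the tool names, the `ToolResult` type, and the in-memory data standing in for a FHIR server are all hypothetical, and a BMI calculation stands in for a real clinical calculator.

```python
from dataclasses import dataclass, field
from typing import Callable

# In-memory stand-in for a FHIR server (assumption for this sketch);
# a real tool would query a FHIR endpoint instead.
FAKE_OBSERVATIONS = {
    "example-123": {"weight_kg": 70.0, "height_m": 1.75},
}


@dataclass
class ToolResult:
    value: dict
    sources: list[str] = field(default_factory=list)  # where the value came from


def get_vitals(patient_id: str) -> ToolResult:
    """Retrieve relevant patient data (stubbed)."""
    obs = FAKE_OBSERVATIONS[patient_id]
    return ToolResult(value=dict(obs), sources=[f"Observation?patient={patient_id}"])


def bmi_calculator(weight_kg: float, height_m: float) -> ToolResult:
    """Invoke a calculator: BMI = weight (kg) / height (m) squared."""
    bmi = weight_kg / height_m ** 2
    return ToolResult(value={"bmi": round(bmi, 1)}, sources=["calculator:bmi"])


# Hypothetical tool registry that the chat interface can call by name.
TOOLS: dict[str, Callable[..., ToolResult]] = {
    "get_vitals": get_vitals,
    "bmi_calculator": bmi_calculator,
}


def call_tool(name: str, **arguments) -> ToolResult:
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**arguments)


def answer_bmi_question(patient_id: str) -> dict:
    """Chain the tools and return an answer that carries its sources."""
    vitals = call_tool("get_vitals", patient_id=patient_id)
    bmi = call_tool("bmi_calculator", **vitals.value)
    return {"answer": bmi.value, "sources": vitals.sources + bmi.sources}


print(answer_bmi_question("example-123"))
```

The design choice worth noting is that every tool returns its sources alongside its value, so the final response stays traceable back to the data and the calculation that produced it.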

The interaction layer is no longer separate from the intelligence layer. The two become a unified experience.

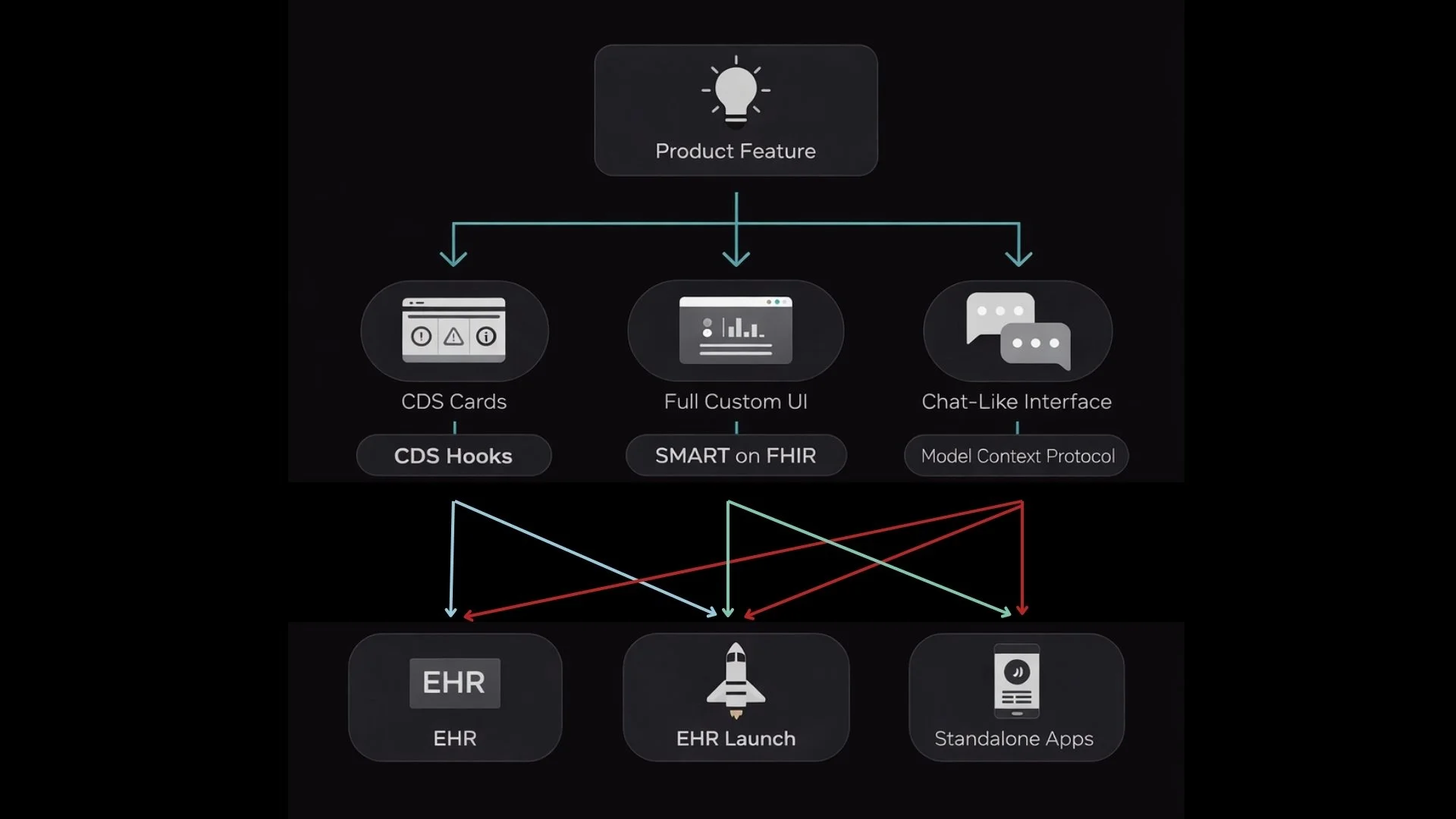

Will Everything Become “Just Chat”?

Probably not. Healthcare workflows are too diverse and too structured to be fully replaced by a single conversational interface. There will always be use cases where dashboards, structured forms, and specialized SMART on FHIR apps are more appropriate.

What we are moving toward is a blended landscape.

In this landscape:

EHR-native interfaces manage structured documentation and transactional workflows

SMART on FHIR apps provide focused, specialized capabilities

Conversational copilots layer on top to synthesize context, reduce cognitive load, and orchestrate tasks

The future is not chat versus interface. It is orchestration across modalities.

The key is choosing the right interface for the right task.

The Evolving Interface Layer

The interaction layer in healthcare is becoming as strategically important as the intelligence layer itself. The models may power the insights, but the interface determines adoption.

As standards like FHIR and MCP mature, the boundary between conversation and workflow will continue to blur. Copilots will not replace existing systems. They will sit alongside them, augmenting and coordinating them.

We recently unpacked these ideas in greater depth in our newsletter, exploring how conversational interfaces, MCP, and clinical decision support are converging.

If you are working at the intersection of MCP, FHIR, and clinical AI, we would welcome the opportunity to connect and exchange perspectives. The evolution of the interface layer is just beginning.